As artificial intelligence development accelerates, Nvidia has cemented its position as the leading supplier of AI computing hardware. The company now generates $2,300 in profit every second, driven primarily by its data center business, which has grown so large that even its networking hardware now brings in more revenue than its traditional gaming GPUs.

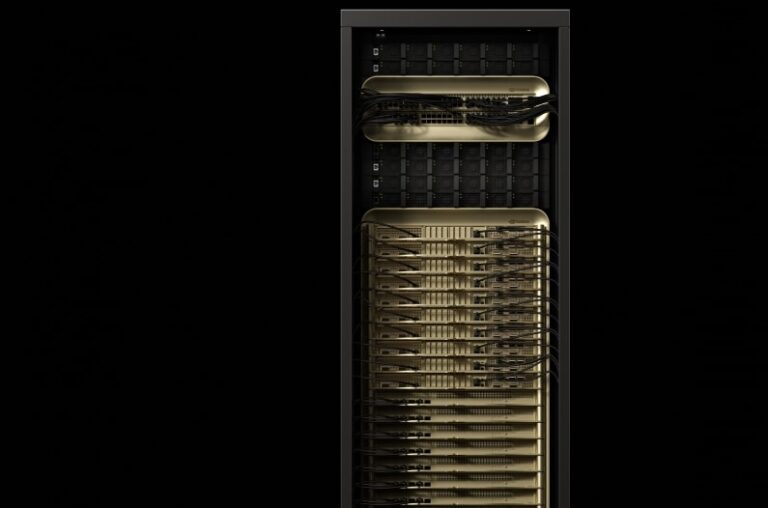

Blackwell Ultra GB300 and Vera Rubin are now at the forefront of Nvidia’s AI efforts. Image credit: Nvidia

At the recent GPU Technology Conference (GTC) 2025 in San Jose, Nvidia CEO Jensen Huang unveiled the company’s ambitious roadmap for next-generation AI chips designed to maintain and extend this commanding market position.

Blackwell Ultra GB300: Immediate Power Boost

Coming in the second half of 2025, the Blackwell Ultra GB300 represents Nvidia’s near-term strategy to satisfy the growing demand for AI computing resources. While not built on an entirely new architecture as initially anticipated, the Blackwell Ultra delivers meaningful improvements over its predecessors.

Technical Specifications and Capabilities

The Blackwell Ultra maintains the same 20 petaflops of AI performance as the original Blackwell chip but introduces a significant memory upgrade—288GB of HBM3e memory compared to 192GB in the standard Blackwell. When deployed in a Blackwell Ultra DGX GB300 “Superpod” cluster configuration, the system delivers:

- 288 CPUs

- 576 GPUs

- 11.5 exaflops of FP4 computing

- 300TB of memory (up from 240TB)
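The Superpod figures above can be cross-checked with simple arithmetic. The sketch below is only a sanity check, not an Nvidia formula: it assumes every GPU contributes its full quoted 20 petaflops, and the constant and function names are illustrative.

```python
# Figures quoted in the article for the DGX GB300 "Superpod".
GPUS_PER_SUPERPOD = 576
CPUS_PER_SUPERPOD = 288
PETAFLOPS_PER_GPU = 20          # per-chip AI performance, as quoted
PETAFLOPS_PER_EXAFLOP = 1_000


def aggregate_exaflops(gpu_count: int, petaflops_per_gpu: float) -> float:
    """Naive aggregate: assumes each GPU delivers its full quoted throughput."""
    return gpu_count * petaflops_per_gpu / PETAFLOPS_PER_EXAFLOP


superpod_exaflops = aggregate_exaflops(GPUS_PER_SUPERPOD, PETAFLOPS_PER_GPU)
gpus_per_cpu = GPUS_PER_SUPERPOD / CPUS_PER_SUPERPOD
memory_growth = 300 / 240       # 300TB now versus 240TB before

print(f"{superpod_exaflops:.2f} exaflops")   # 11.52 exaflops
print(f"{gpus_per_cpu:.0f} GPUs per CPU")    # 2 GPUs per CPU
print(f"{memory_growth:.2f}x memory")        # 1.25x memory
```

The naive product of 11.52 exaflops lines up with the quoted 11.5 exaflops of FP4 computing, and the memory figure works out to a 25% increase.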

Compared to the H100 chips that fueled Nvidia’s initial AI boom in 2022, the Blackwell Ultra offers:

- 1.5x the FP4 inference capability

- Dramatically improved “AI reasoning” performance

- 10x the token processing speed, at 1,000 tokens per second

This enables practical applications like running an interactive copy of DeepSeek-R1 671B that provides answers in just 10 seconds, compared to 1.5 minutes on H100 systems.
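The token-rate and response-time claims can be compared directly. The sketch below assumes, purely for illustration, that answer time is dominated by token generation at a steady rate; the variable names are hypothetical and the inputs are the figures quoted above.

```python
# Figures quoted in the article.
H100_ANSWER_SECONDS = 90         # "1.5 minutes"
ULTRA_ANSWER_SECONDS = 10
ULTRA_TOKENS_PER_SECOND = 1_000
TOKEN_SPEEDUP = 10               # "10x token processing speed"

# Implied H100 token rate, derived from the quoted 10x figure.
h100_tokens_per_second = ULTRA_TOKENS_PER_SECOND / TOKEN_SPEEDUP

# Speedup implied by the two answer times.
latency_speedup = H100_ANSWER_SECONDS / ULTRA_ANSWER_SECONDS

# Tokens each system would emit in its quoted answer time (steady-rate assumption).
ultra_tokens = ULTRA_TOKENS_PER_SECOND * ULTRA_ANSWER_SECONDS
h100_tokens = h100_tokens_per_second * H100_ANSWER_SECONDS

print(f"H100 rate: {h100_tokens_per_second:.0f} tokens/s")   # H100 rate: 100 tokens/s
print(f"Latency speedup: {latency_speedup:.0f}x")            # Latency speedup: 9x
print(f"Tokens per answer: {ultra_tokens} vs {h100_tokens:.0f}")  # Tokens per answer: 10000 vs 9000
```

Under that assumption the two claims are roughly consistent: a 10x token rate and a 9x shorter answer time both correspond to a response of about 9,000 to 10,000 tokens.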

Broadening Access to Powerful AI Hardware

In a notable shift, Nvidia will offer single-chip Blackwell Ultra solutions through a desktop computer called the DGX Station. This compact powerhouse features:

- A single GB300 Blackwell Ultra chip

- 784GB of unified system memory

- Built-in 800Gbps Nvidia networking

- 20 petaflops of AI performance

Major hardware partners including Asus, Dell, HP, Boxx, Lambda, and Supermicro will manufacture versions of this desktop, potentially bringing enterprise-grade AI computing capabilities to a broader range of organizations.

For those requiring more substantial computing resources, Nvidia will offer the GB300 NVL72 single rack configuration with:

- 1.1 exaflops of FP4 computing

- 20TB of HBM memory

- 40TB of “fast memory”

- 130TB/sec of NVLink bandwidth

- 14.4TB/sec of networking bandwidth

Vera Rubin: The Next Architectural Leap

Looking further ahead, Nvidia revealed its next-generation architecture named after pioneering astronomer Vera Rubin. Scheduled for release in the second half of 2026, Vera Rubin represents a significant advancement in AI chip design.

Revolutionary Performance Gains

The Vera Rubin architecture will deliver:

- 50 petaflops of FP4 computing (2.5x Blackwell's 20 petaflops)

- Custom Nvidia-designed CPU called “Vera”

- Substantially improved AI inferencing and training capabilities

- Significantly more memory bandwidth

The Vera CPU itself offers approximately twice the performance of the Grace CPU used in Nvidia’s current Grace Blackwell chips, so the CPU side of the platform advances alongside the GPU.

Rubin Ultra: Pushing Boundaries Further

Following the Vera Rubin release, Nvidia plans to launch Rubin Ultra in the second half of 2027. This ultra-high-performance variant effectively combines multiple Rubin GPUs into a single package:

- 100 petaflops of FP4 computing

- Nearly quadruple the memory at 1TB

- Four GPUs integrated into a single package

A full NVL576 rack of Rubin Ultra will provide 15 exaflops of FP4 inference and 5 exaflops of FP8 training, roughly a 14x performance improvement over the 1.1-exaflop Blackwell Ultra rack shipping this year.
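The generational ratios follow directly from the quoted figures. The short sketch below recomputes them; the dictionary and variable names are illustrative, and the rack comparison assumes the 14x claim is measured against the 1.1 exaflops of FP4 quoted earlier for the GB300 NVL72.

```python
# Per-package FP4 throughput in petaflops, as quoted in the article.
FP4_PETAFLOPS = {"Blackwell Ultra": 20, "Vera Rubin": 50, "Rubin Ultra": 100}
BASELINE = "Blackwell Ultra"

# Gain of each generation relative to Blackwell Ultra.
per_package_gain = {
    name: petaflops / FP4_PETAFLOPS[BASELINE]
    for name, petaflops in FP4_PETAFLOPS.items()
}

# Rack-level FP4 figures in exaflops, as quoted.
RUBIN_ULTRA_NVL576_EXAFLOPS = 15
GB300_NVL72_EXAFLOPS = 1.1
rack_gain = RUBIN_ULTRA_NVL576_EXAFLOPS / GB300_NVL72_EXAFLOPS

for name, gain in per_package_gain.items():
    print(f"{name}: {gain:.1f}x")
print(f"Rack-level gain: {rack_gain:.1f}x")   # Rack-level gain: 13.6x
```

The per-package figures give 2.5x for Vera Rubin and 5x for Rubin Ultra, while the rack-level ratio of about 13.6x rounds to the quoted 14x. The larger rack-level gain reflects the NVL576 rack holding far more GPUs than the NVL72.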

The Road to Feynman: Nvidia’s Long-Term Vision

During his GTC keynote, Jensen Huang briefly mentioned Nvidia’s plans beyond Vera Rubin. The company’s 2028 architecture will be named “Feynman,” presumably after the renowned theoretical physicist Richard Feynman. While specific details remain scarce, Huang confirmed that Feynman will incorporate the Vera CPU, and the architecture is positioned to continue Nvidia’s generation-over-generation performance gains.

Market Impact and Industry Demand

Nvidia’s aggressive chip development roadmap comes at a time when AI computing demand continues to surge. Huang emphasized that the industry requires “100 times more than we thought we needed this time last year” to keep pace with advancements in artificial intelligence.

The market has responded enthusiastically to Nvidia’s innovations. The company has already recorded $11 billion in Blackwell revenue, and its top four buyers alone have purchased 1.8 million Blackwell chips so far in 2025, clear evidence of the appetite for high-performance AI computing hardware.

The Expanding AI Computing Portfolio

As AI models grow larger and more sophisticated, the need for specialized computing hardware becomes increasingly critical. Nvidia’s approach combines GPU acceleration, custom CPUs, massive memory bandwidth, and high-speed interconnects to address the compute, memory, and networking demands of modern AI workloads.

By establishing a clear product roadmap extending through 2028, Nvidia signals its commitment to maintaining leadership in this rapidly evolving field. The progression from Blackwell Ultra to Vera Rubin to Feynman illustrates not only technological advancement but also Nvidia’s understanding that consistent innovation is essential to support the expanding horizons of artificial intelligence.

For organizations building AI infrastructure today, Nvidia’s announcements provide valuable insight into the future capabilities that will shape AI development for years to come. As these next-generation chips reach the market, we can expect to see corresponding advancements in AI applications across sectors ranging from healthcare and scientific research to finance and creative industries.

Sources: TechCrunch, TheVerge

Written by Alius Noreika